Cross-dataset skin lesion classification under dataset shift

Results

This page hard-codes the v1 benchmark snapshot from pinned experiment runs. It is updated when a new run outperforms a pinned run.

Pinned Runs

Run ID: 20260428_042520

Single-model ResNet50 baseline with a fixed 0.5 threshold.

Run ID: ensemble_ens_20260429_b

Ensemble of five fold models, aggregated by averaging their malignancy probabilities.
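As a minimal sketch of mean-probability aggregation, the snippet below averages per-fold malignancy probabilities image by image and then thresholds the mean. The function name `ensemble_predict` and the input layout are illustrative assumptions, and the 0.5 threshold is borrowed from the baseline description; the source does not state which threshold the ensemble uses.

```python
from statistics import fmean


def ensemble_predict(fold_probs, threshold=0.5):
    """Average per-fold malignancy probabilities and threshold the mean.

    fold_probs: one list per fold, each holding one probability per image.
    threshold: assumed decision threshold (hypothetical for the ensemble).
    """
    n_images = len(fold_probs[0])
    if any(len(probs) != n_images for probs in fold_probs):
        raise ValueError("every fold must score the same number of images")
    # zip(*fold_probs) groups the fold scores that belong to the same image.
    mean_probs = [fmean(scores) for scores in zip(*fold_probs)]
    labels = [int(p >= threshold) for p in mean_probs]
    return mean_probs, labels


# Toy scores for two images from five folds (made-up values).
toy_fold_probs = [[0.9, 0.2], [0.7, 0.4], [0.8, 0.3], [0.6, 0.1], [1.0, 0.5]]
toy_means, toy_labels = ensemble_predict(toy_fold_probs)
```

Averaging probabilities rather than hard votes keeps the ensemble output continuous, so ROC and precision-recall curves can still be drawn from it.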

Run ID: 20260423_105922

Pretrained ViT (ViT-B16) with warmup and cosine-style learning-rate decay.

Run ID: 20260430_165245

Pretrained larger ViT (ViT-L16) with warmup and cosine-style learning-rate decay.
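For readers unfamiliar with warmup followed by cosine decay, the sketch below shows one common form of such a schedule: a linear ramp to the base rate, then a half-cosine down to a floor. The function name `lr_at_step` and all parameter values are hypothetical; the pinned runs only describe their schedule as "cosine-style", so the exact shape they used may differ.

```python
import math


def lr_at_step(step, total_steps, warmup_steps, base_lr, min_lr=0.0):
    """Learning rate at a 0-indexed step: linear warmup, then cosine decay."""
    if step < warmup_steps:
        # Linear ramp that reaches base_lr on the last warmup step.
        return base_lr * (step + 1) / warmup_steps
    # Fraction of the decay phase completed, clamped to [0, 1].
    decay_steps = max(1, total_steps - warmup_steps)
    progress = min((step - warmup_steps) / decay_steps, 1.0)
    # Half-cosine from base_lr (progress 0) down to min_lr (progress 1).
    return min_lr + 0.5 * (base_lr - min_lr) * (1.0 + math.cos(math.pi * progress))


# Illustrative settings only: 100 steps, 10 of them warmup, peak rate 1e-3.
schedule = [lr_at_step(s, 100, 10, 1e-3) for s in range(101)]
```

The warmup phase avoids large early updates to pretrained weights, and the cosine phase lowers the rate smoothly instead of in discrete drops.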

Metric Tables

| Model | Accuracy | Precision | Recall | F1 | ROC AUC | PR AUC | Confusion Matrix |

|---|---|---|---|---|---|---|---|

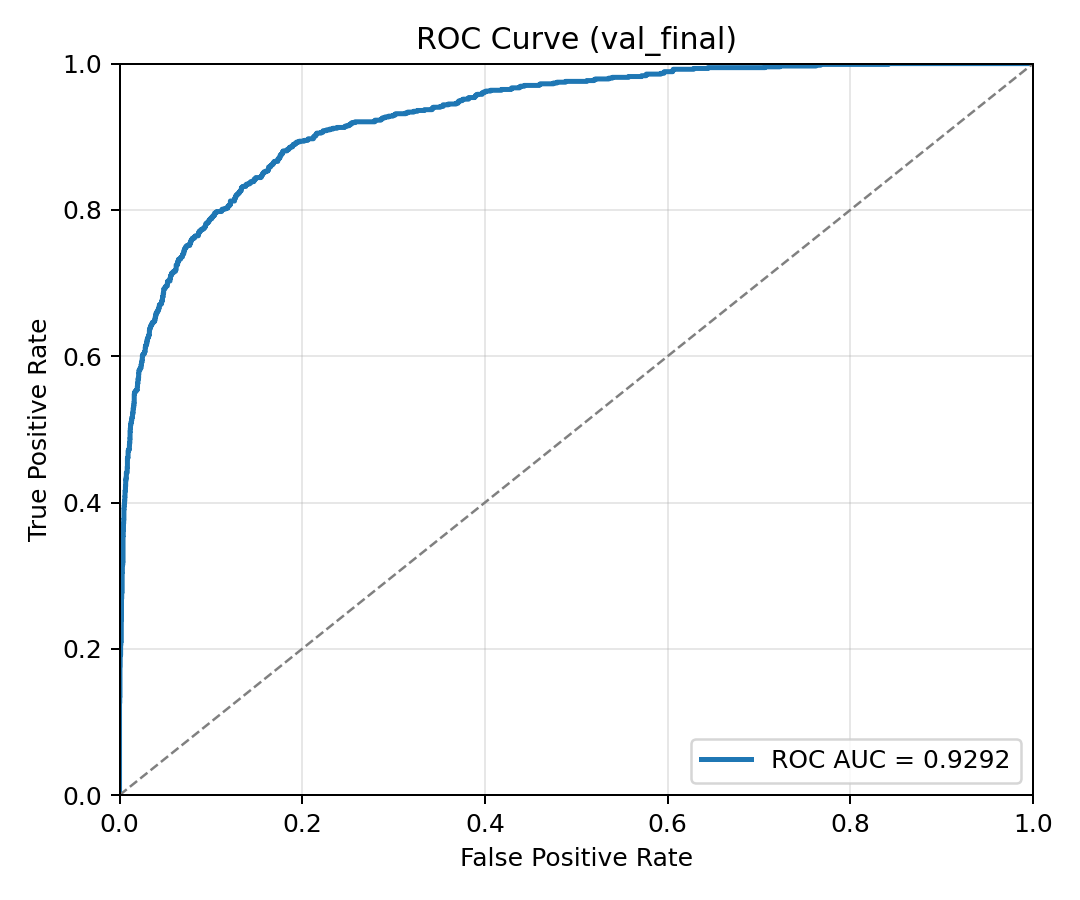

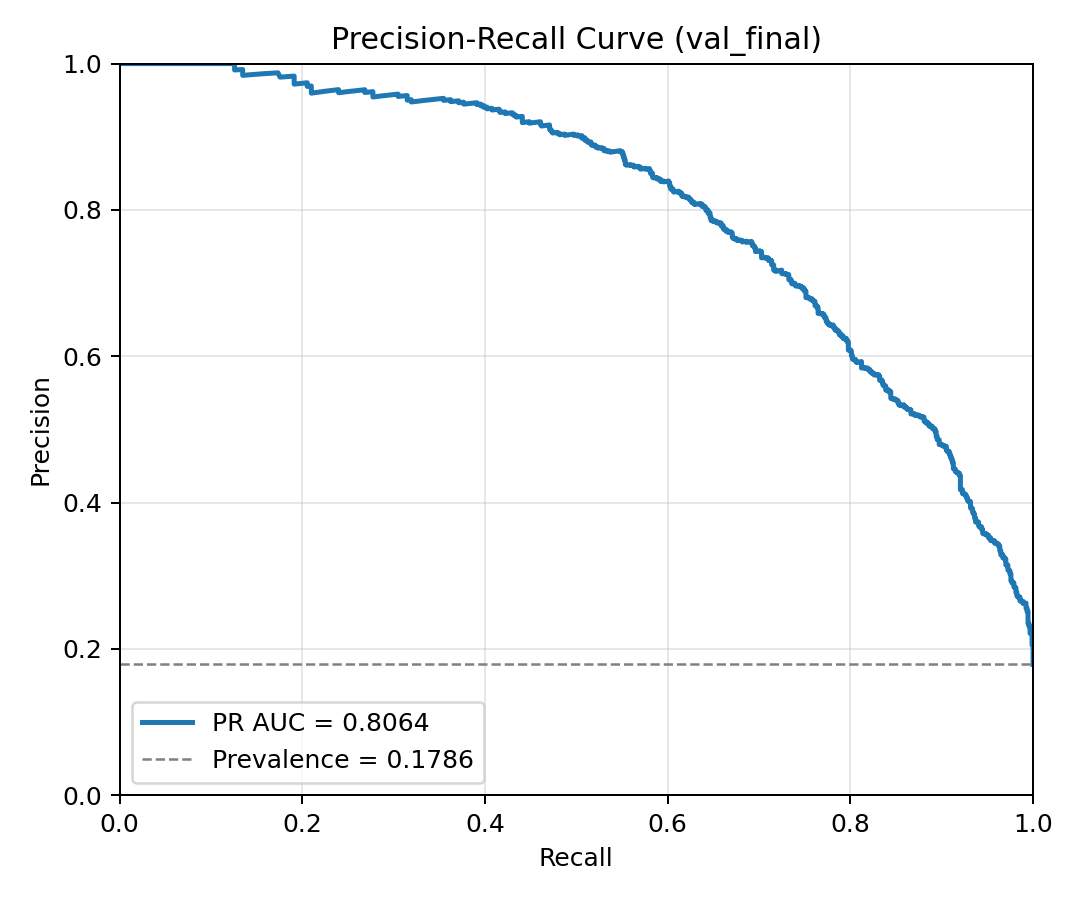

| Baseline CNN | 85.14% | 55.56% | 83.87% | 66.84% | 0.9292 | 0.8064 | TN 3555 | FP 607 | FN 146 | TP 759 |

| Ensemble CNN (5-fold) | 83.41% | 52.78% | 67.07% | 59.08% | 0.8527 | 0.6511 | TN 3622 | FP 543 | FN 298 | TP 607 |

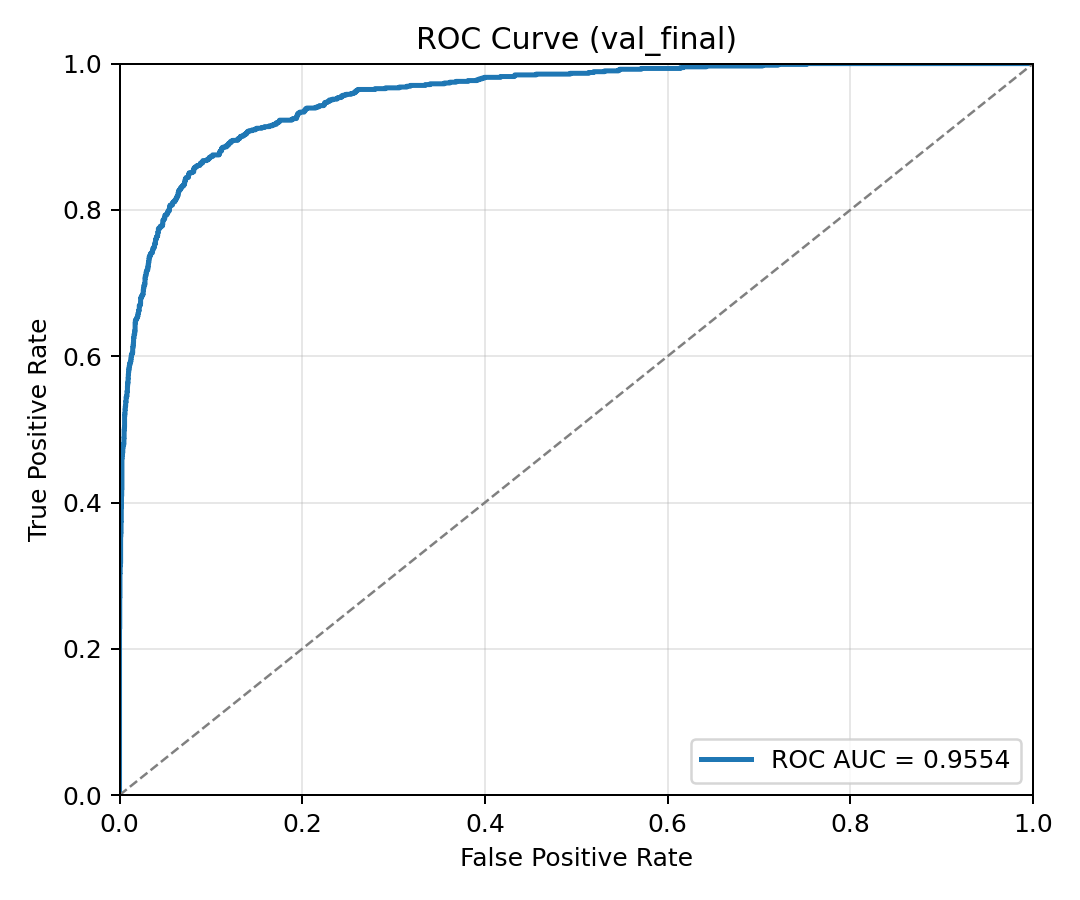

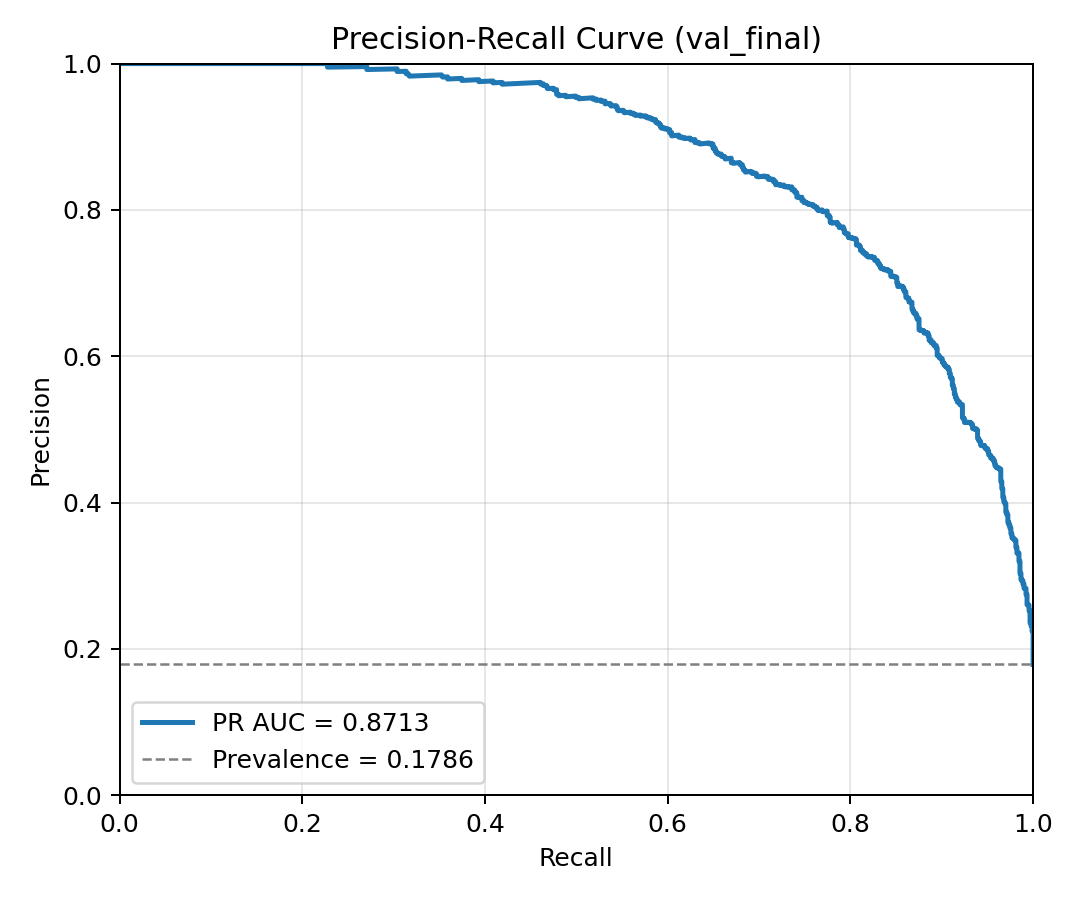

| Vision Transformer (ViT-B16) | 92.40% | 79.82% | 76.91% | 78.33% | 0.9554 | 0.8713 | TN 3986 | FP 176 | FN 209 | TP 696 |

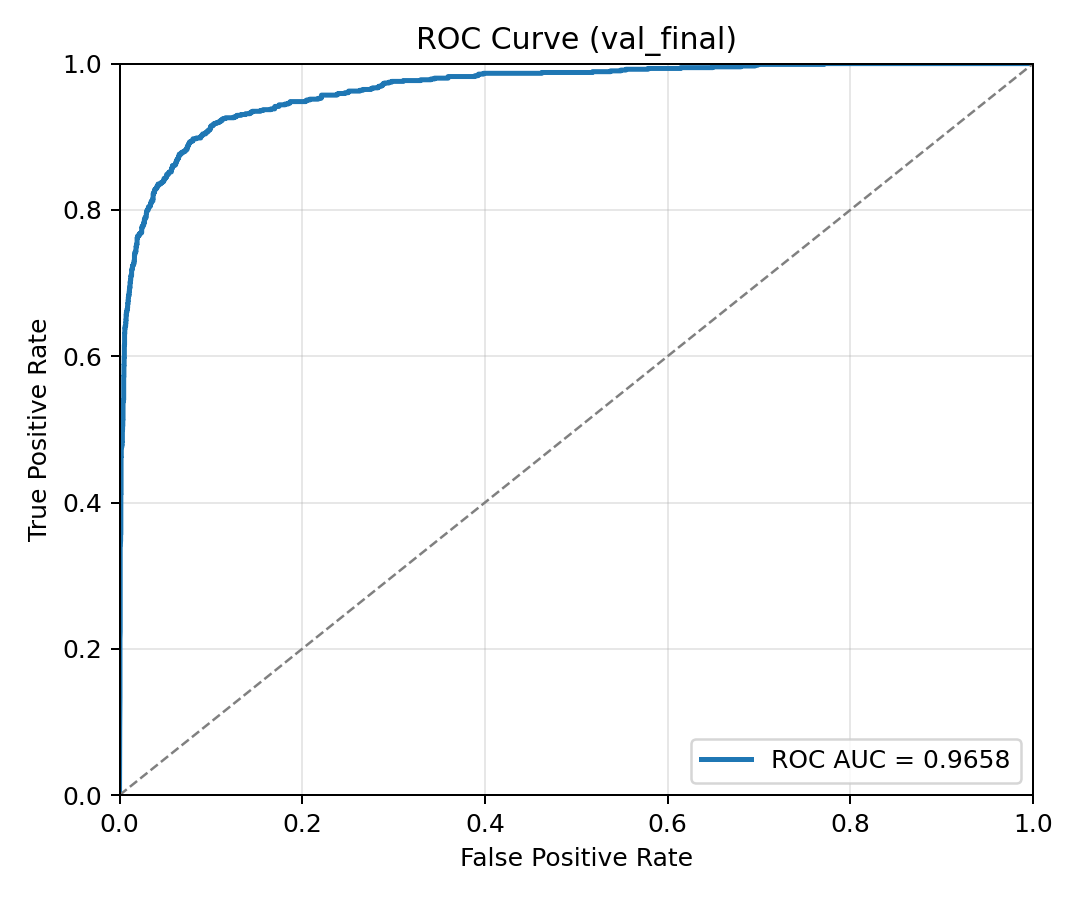

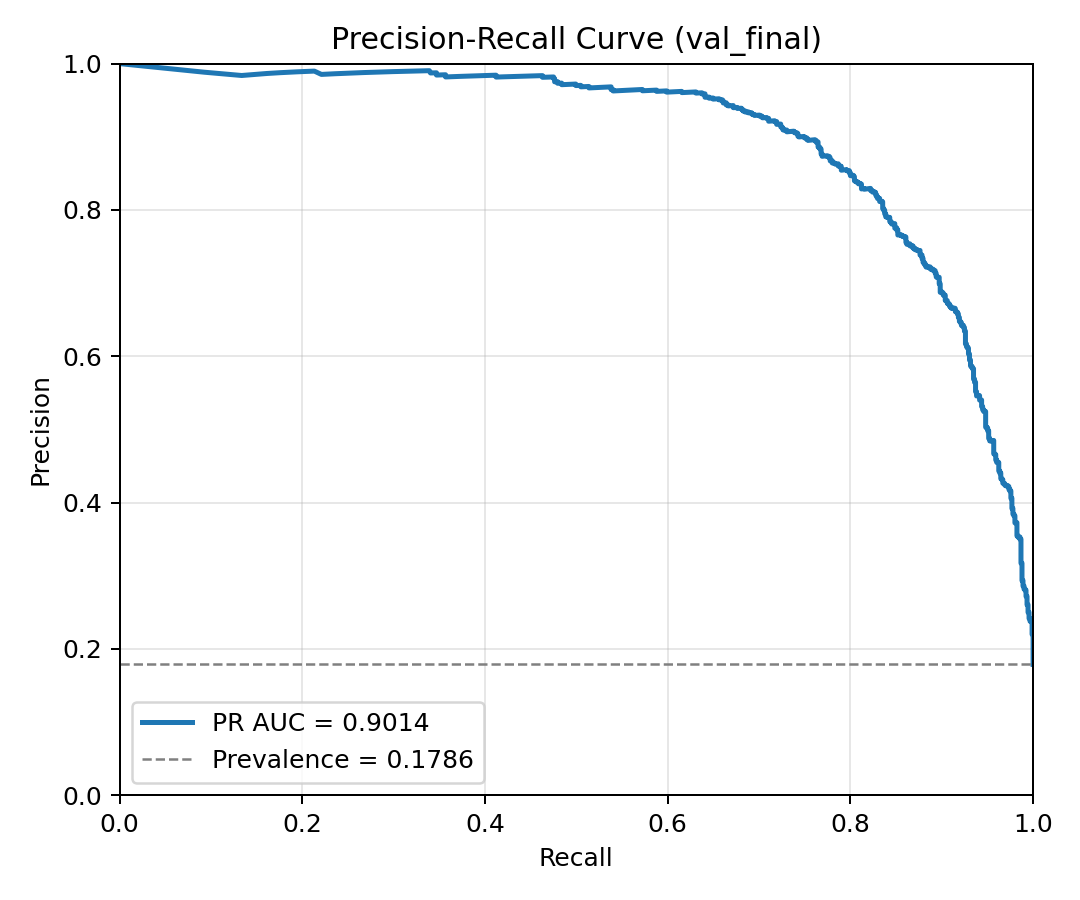

| Vision Transformer (ViT-L16) | 93.86% | 84.45% | 80.44% | 82.40% | 0.9658 | 0.9014 | TN 4028 | FP 134 | FN 177 | TP 728 |

| Model | Accuracy | Precision | Recall | F1 | ROC AUC | PR AUC | Confusion Matrix |

|---|---|---|---|---|---|---|---|

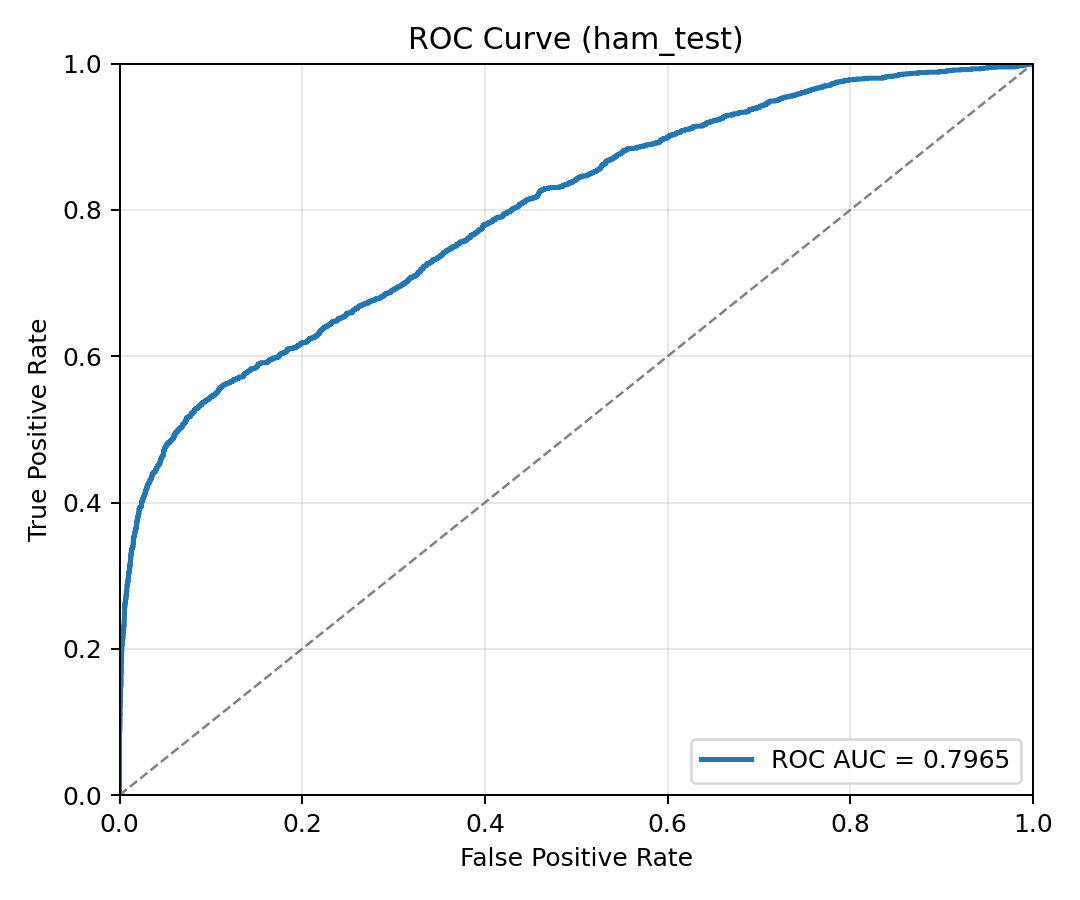

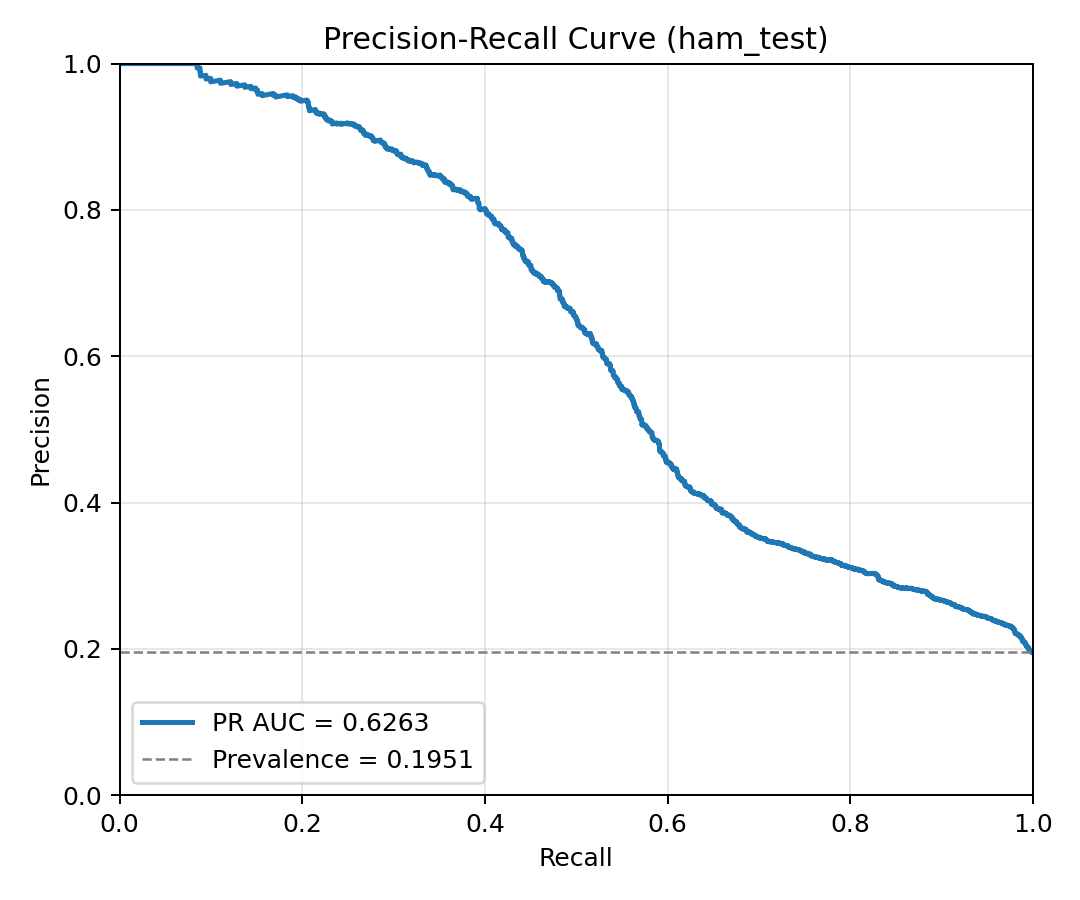

| Baseline CNN | 84.34% | 61.77% | 51.84% | 56.37% | 0.7965 | 0.6263 | TN 7434 | FP 627 | FN 941 | TP 1013 |

| Ensemble CNN (5-fold) | 83.69% | 62.75% | 40.43% | 49.18% | 0.7966 | 0.5739 | TN 7592 | FP 469 | FN 1164 | TP 790 |

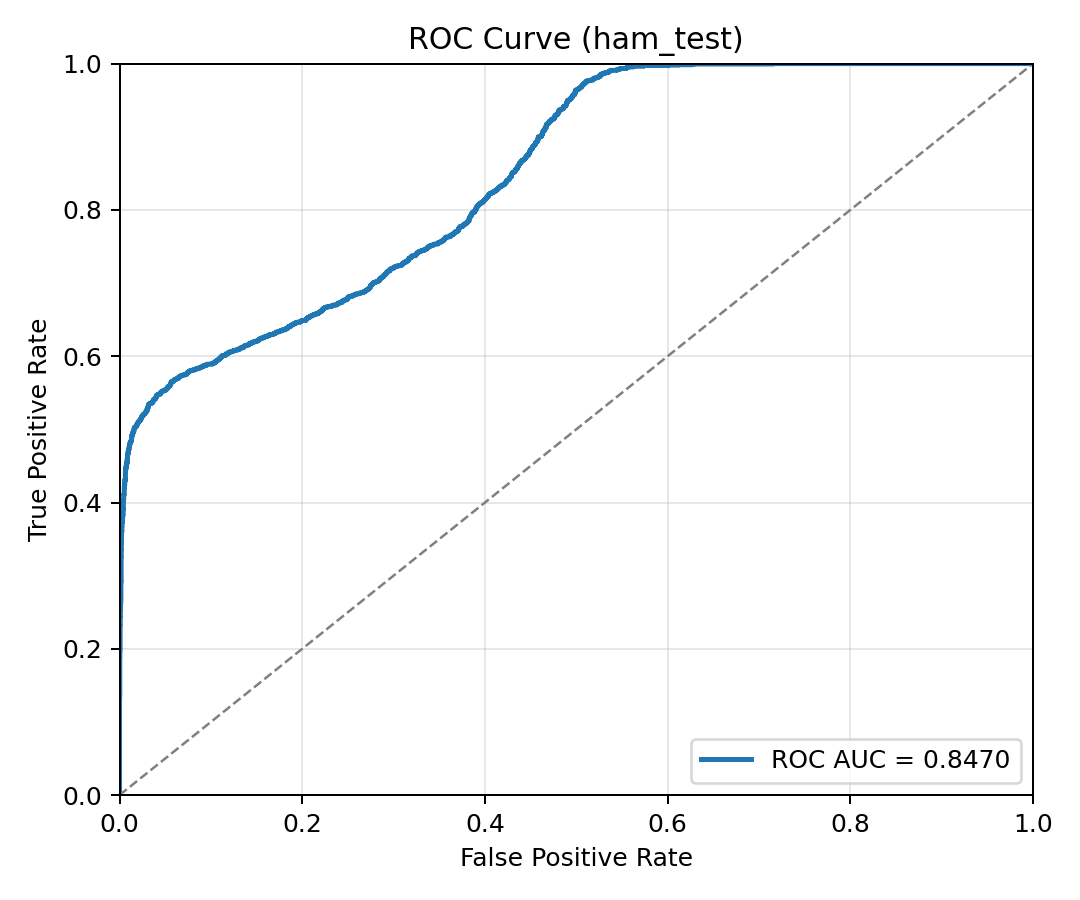

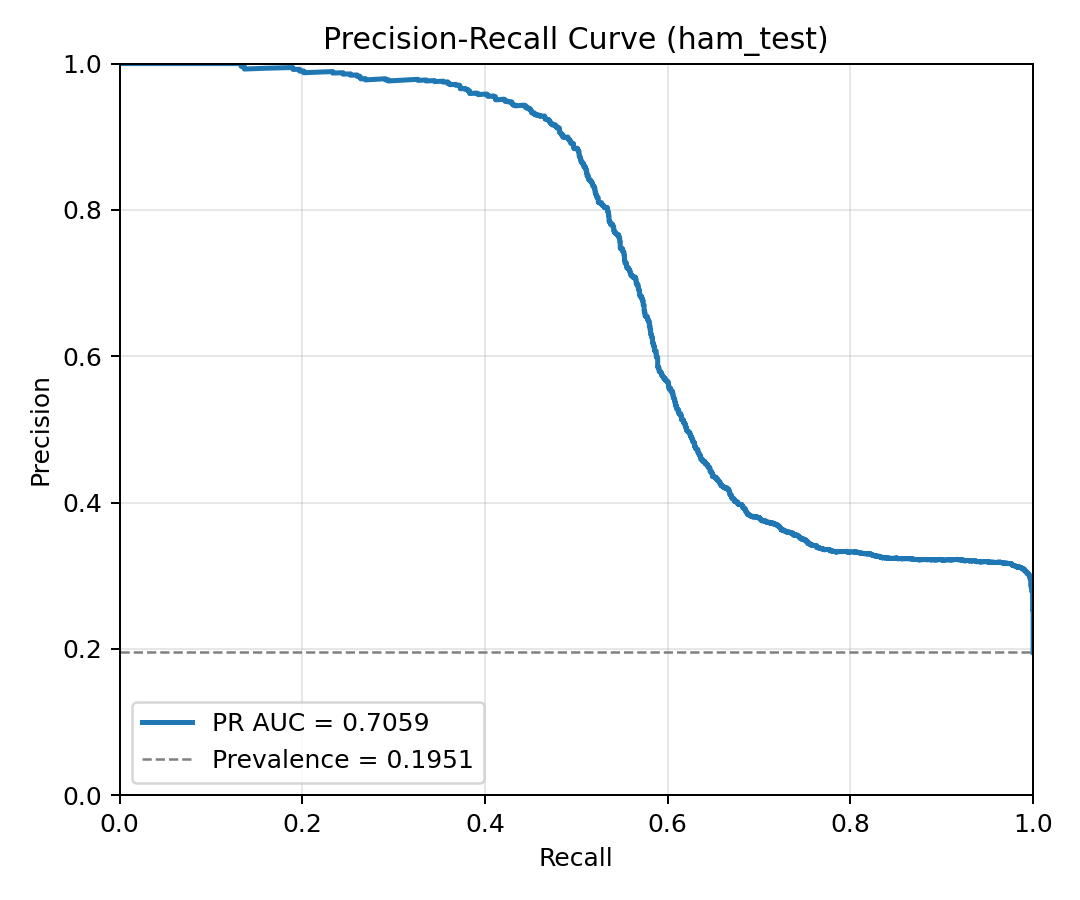

| Vision Transformer (ViT-B16) | 88.96% | 91.01% | 48.16% | 62.99% | 0.8470 | 0.7059 | TN 7968 | FP 93 | FN 1013 | TP 941 |

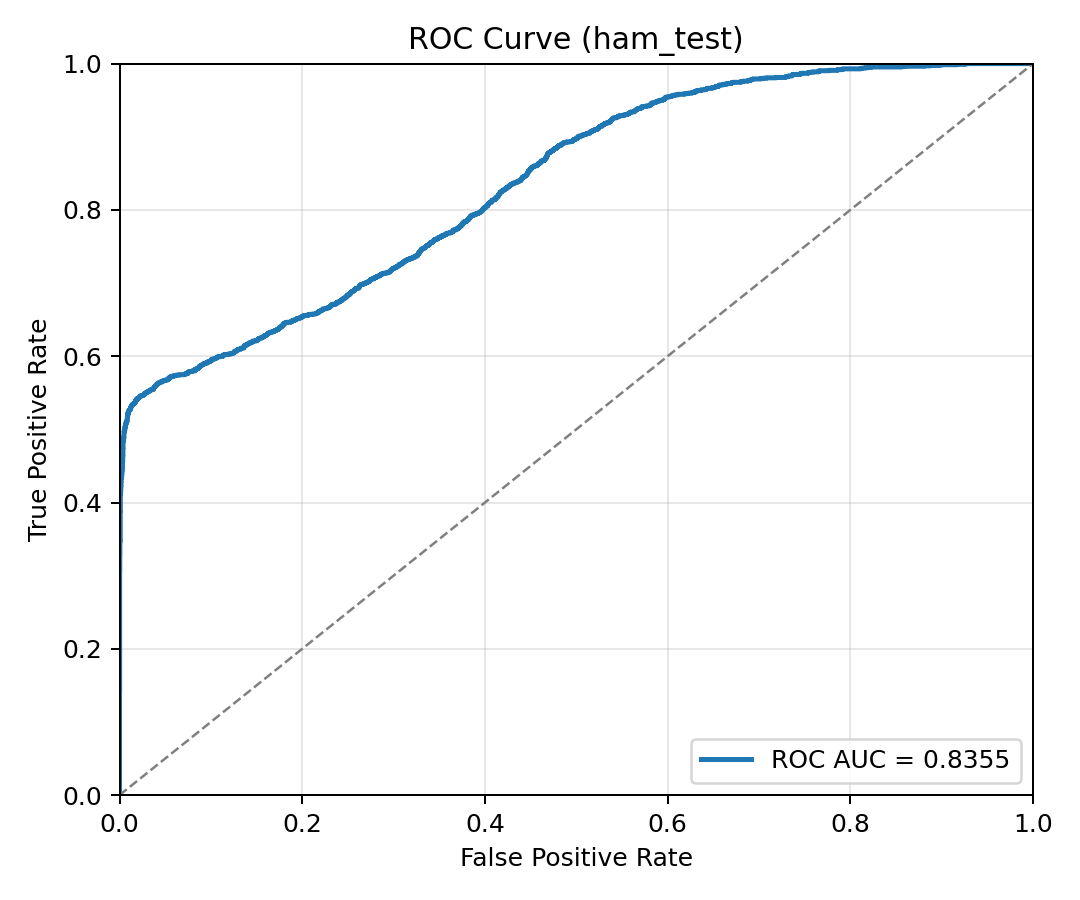

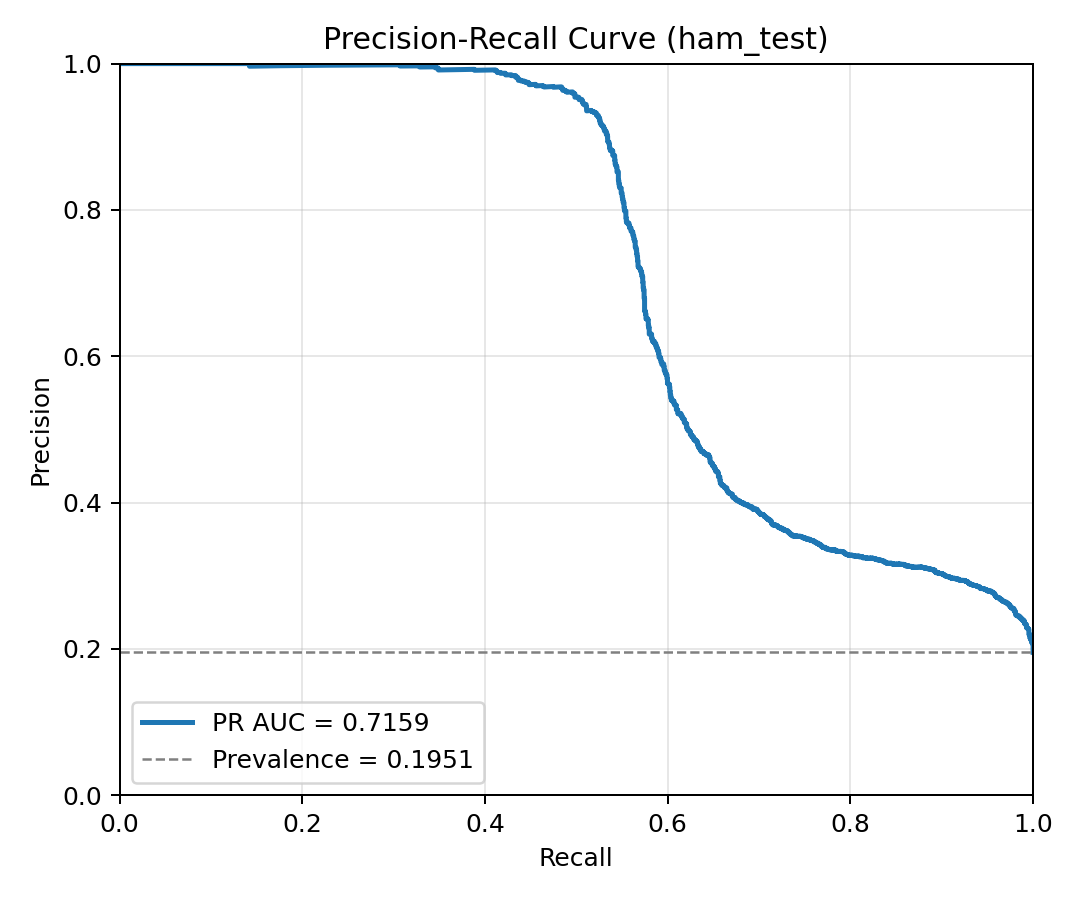

| Vision Transformer (ViT-L16) | 89.85% | 95.00% | 50.61% | 66.04% | 0.8355 | 0.7159 | TN 8009 | FP 52 | FN 965 | TP 989 |
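The accuracy, precision, recall, and F1 columns can be recomputed from the confusion-matrix counts using the standard definitions. The sketch below does so for the two Baseline CNN rows above; the function name `metrics_from_counts` is illustrative and is not taken from the benchmark code.

```python
def metrics_from_counts(tn, fp, fn, tp):
    """Derive threshold metrics from binary confusion-matrix counts."""
    total = tn + fp + fn + tp
    accuracy = (tp + tn) / total
    # Guard against empty denominators (no predicted or no actual positives).
    precision = tp / (tp + fp) if (tp + fp) else 0.0
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    f1 = (
        2 * precision * recall / (precision + recall)
        if (precision + recall)
        else 0.0
    )
    return {"accuracy": accuracy, "precision": precision, "recall": recall, "f1": f1}


# Baseline CNN counts copied from the two tables above.
baseline_first = metrics_from_counts(tn=3555, fp=607, fn=146, tp=759)
baseline_second = metrics_from_counts(tn=7434, fp=627, fn=941, tp=1013)

for name, value in baseline_first.items():
    print(f"{name}: {100 * value:.2f}%")
```

ROC AUC and PR AUC cannot be recovered this way because they depend on the full score distribution, not on counts at a single threshold.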

Shift Analysis

| Model | Accuracy Gap | Precision Gap | Recall Gap | F1 Gap | ROC AUC Gap | PR AUC Gap |
|---|---|---|---|---|---|---|
| Baseline CNN | +0.80% | -6.20% | +32.03% | +10.47% | +13.27% | +18.01% |
| Ensemble CNN (5-fold) | -0.28% | -9.97% | +26.64% | +9.90% | +5.61% | +7.72% |
| Vision Transformer (ViT-B16) | +3.45% | -11.19% | +28.75% | +15.35% | +10.84% | +16.54% |
| Vision Transformer (ViT-L16) | +4.02% | -10.55% | +29.83% | +16.36% | +13.03% | +18.55% |

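Gaps are computed as validation minus external test and are reported in percentage points, with AUC differences multiplied by 100. Positive values indicate the model performed better on validation than on external test. This is expected under dataset shift and is one of the core benchmark signals. Precision runs the other way for every model (higher on external test), and recall accounts for most of the drop, falling by roughly 27 to 32 points.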
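As a minimal sketch of how the gap columns relate to the metric tables, the snippet below subtracts external-test values from validation values for the Baseline CNN. The function name `shift_gaps` and the dictionary layout are illustrative assumptions. Because the inputs here are the already-rounded table values, a recomputed gap can differ from the listed one by 0.01 (precision comes out as -6.21 against the listed -6.20), presumably because the published gaps were computed before rounding.

```python
def shift_gaps(validation, external):
    """Return validation-minus-external gaps, in percentage points."""
    return {
        name: round(validation[name] - external[name], 2)
        for name in validation
    }


# Baseline CNN values copied from the metric tables, all on a 0-100 scale
# (the AUC columns are multiplied by 100 to match the gap table's units).
baseline_validation = {
    "accuracy": 85.14, "precision": 55.56, "recall": 83.87,
    "f1": 66.84, "roc_auc": 92.92, "pr_auc": 80.64,
}
baseline_external = {
    "accuracy": 84.34, "precision": 61.77, "recall": 51.84,
    "f1": 56.37, "roc_auc": 79.65, "pr_auc": 62.63,
}

gaps = shift_gaps(baseline_validation, baseline_external)
for name, gap in gaps.items():
    print(f"{name}: {gap:+.2f}")
```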

Curves

Validation ROC curve for the baseline CNN on the ISIC split.

Validation precision-recall curve for the baseline CNN on ISIC.

External-test ROC curve for the baseline CNN on HAM10000.

External-test precision-recall curve for the baseline CNN on HAM10000.

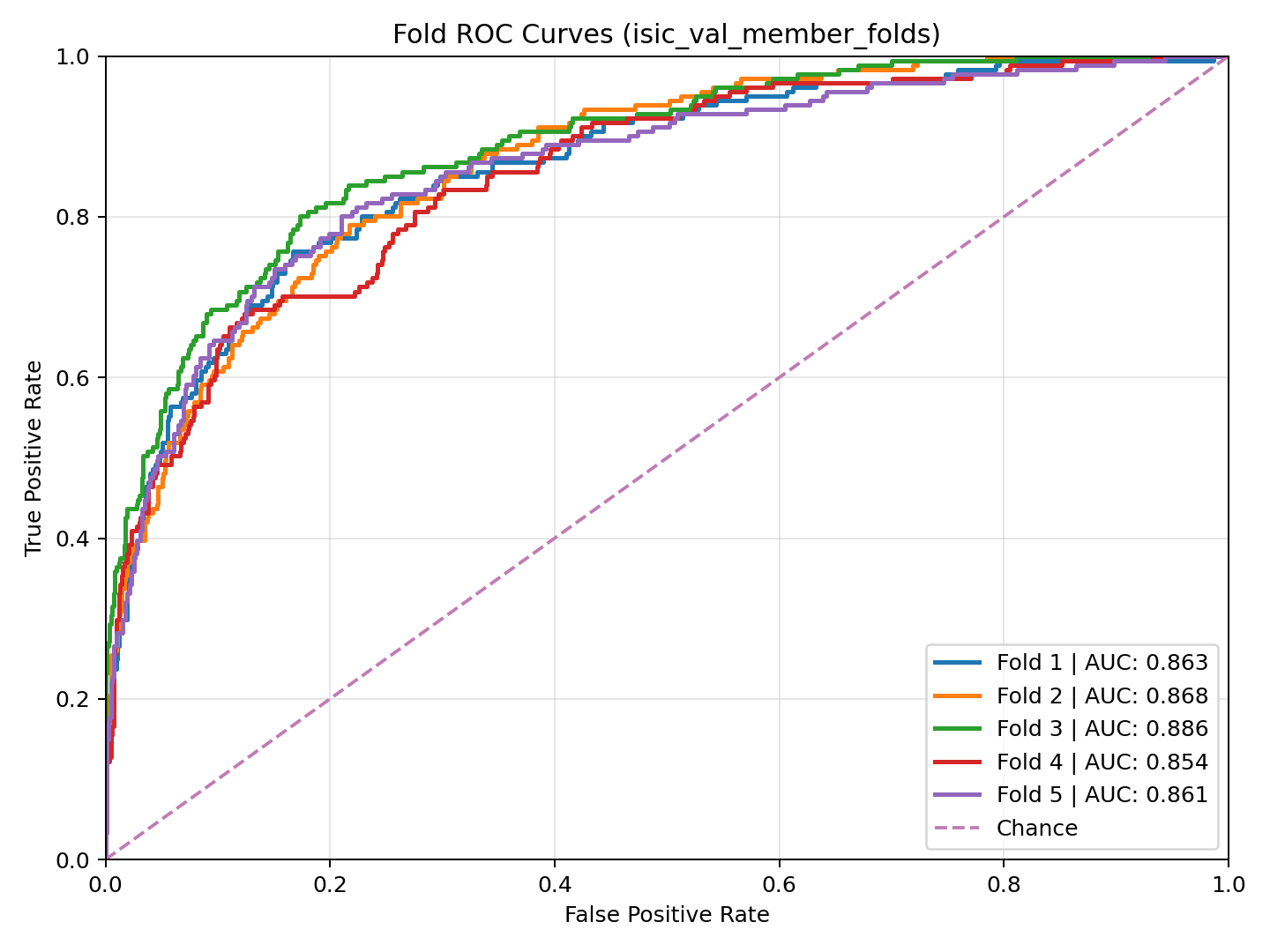

ROC curves of the 5-folds on ISIC validation.

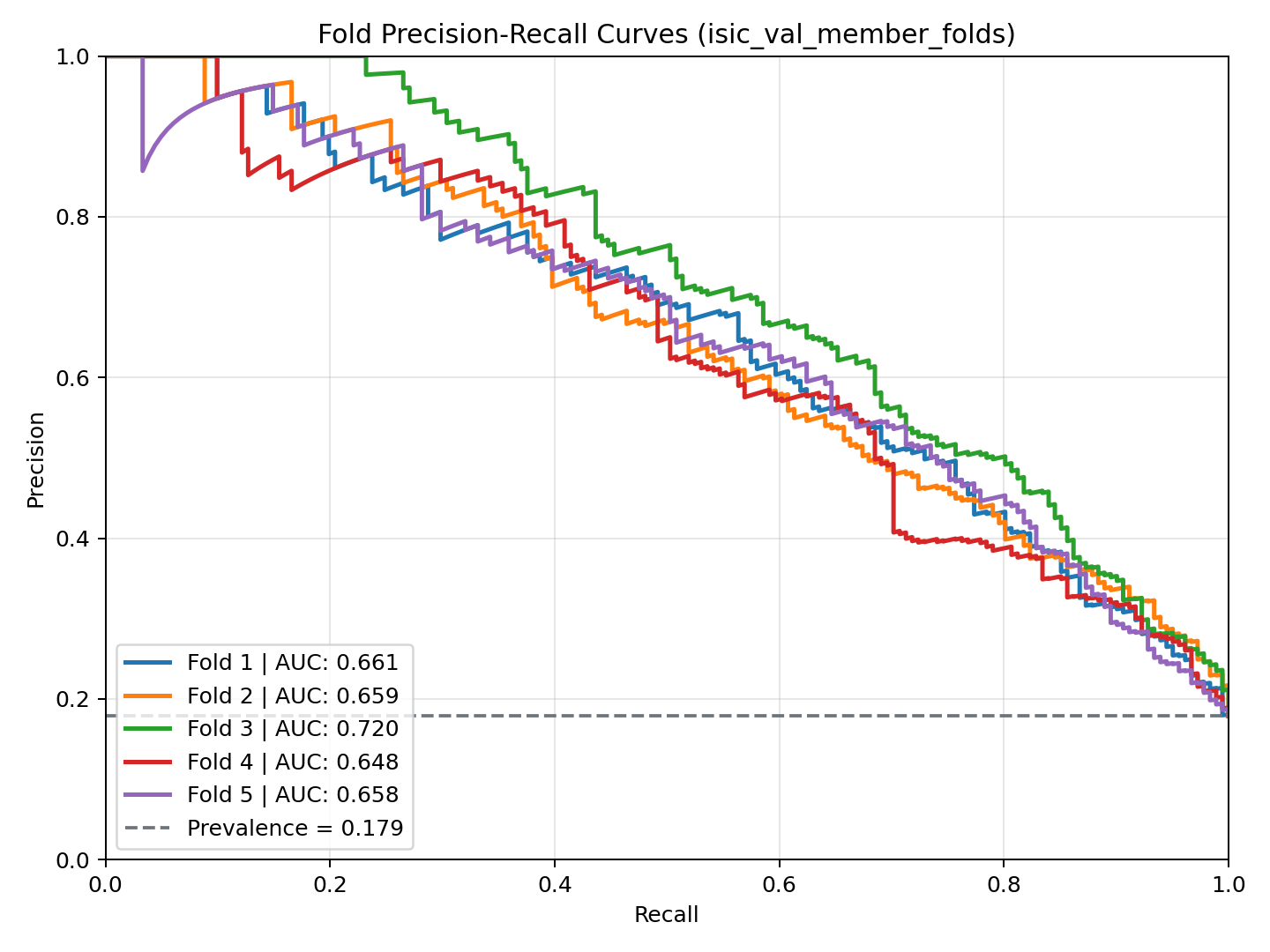

Precision-recall curves of the ISIC 5-folds.

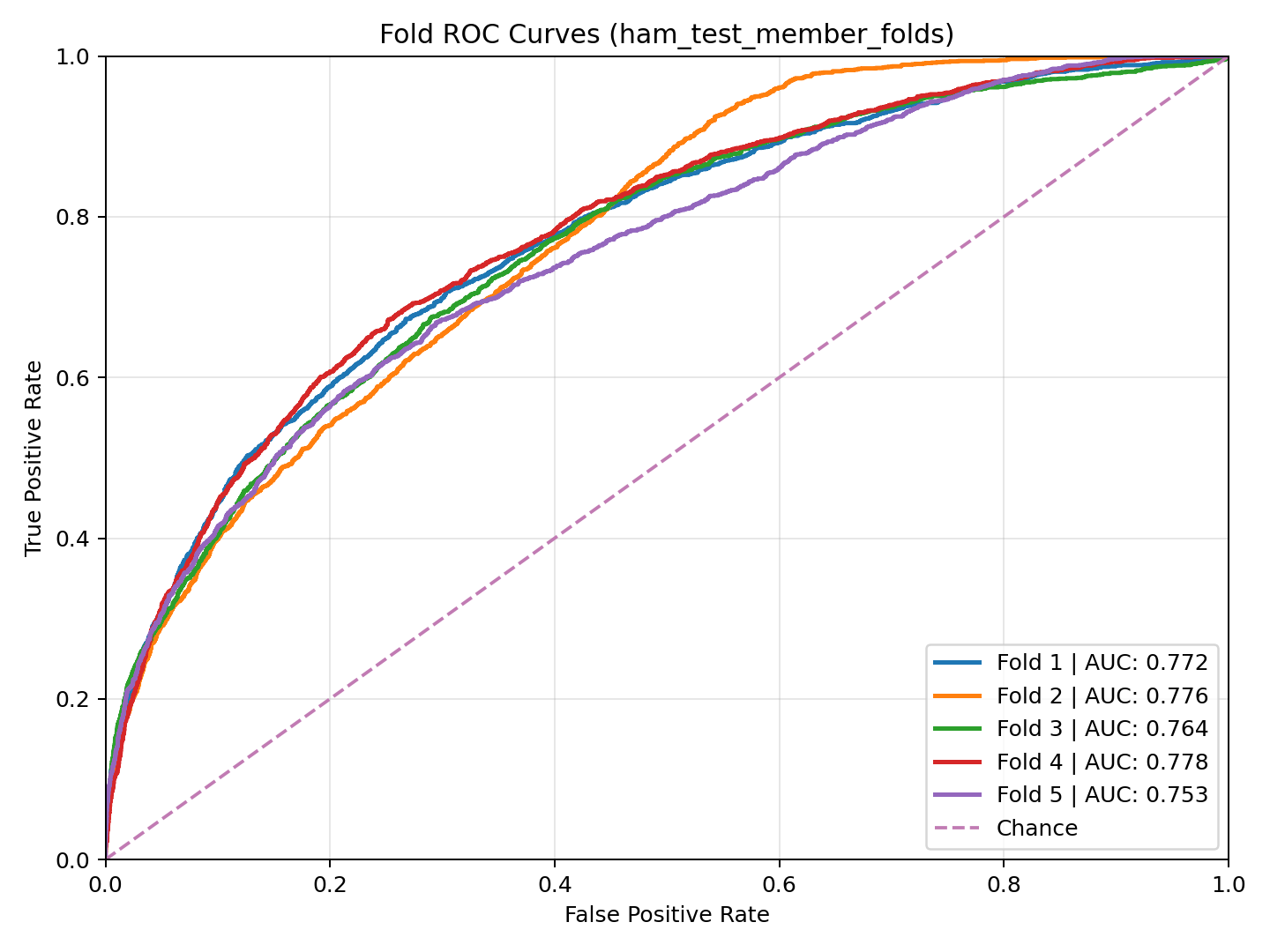

External-test ROC fold curves of the ensemble.

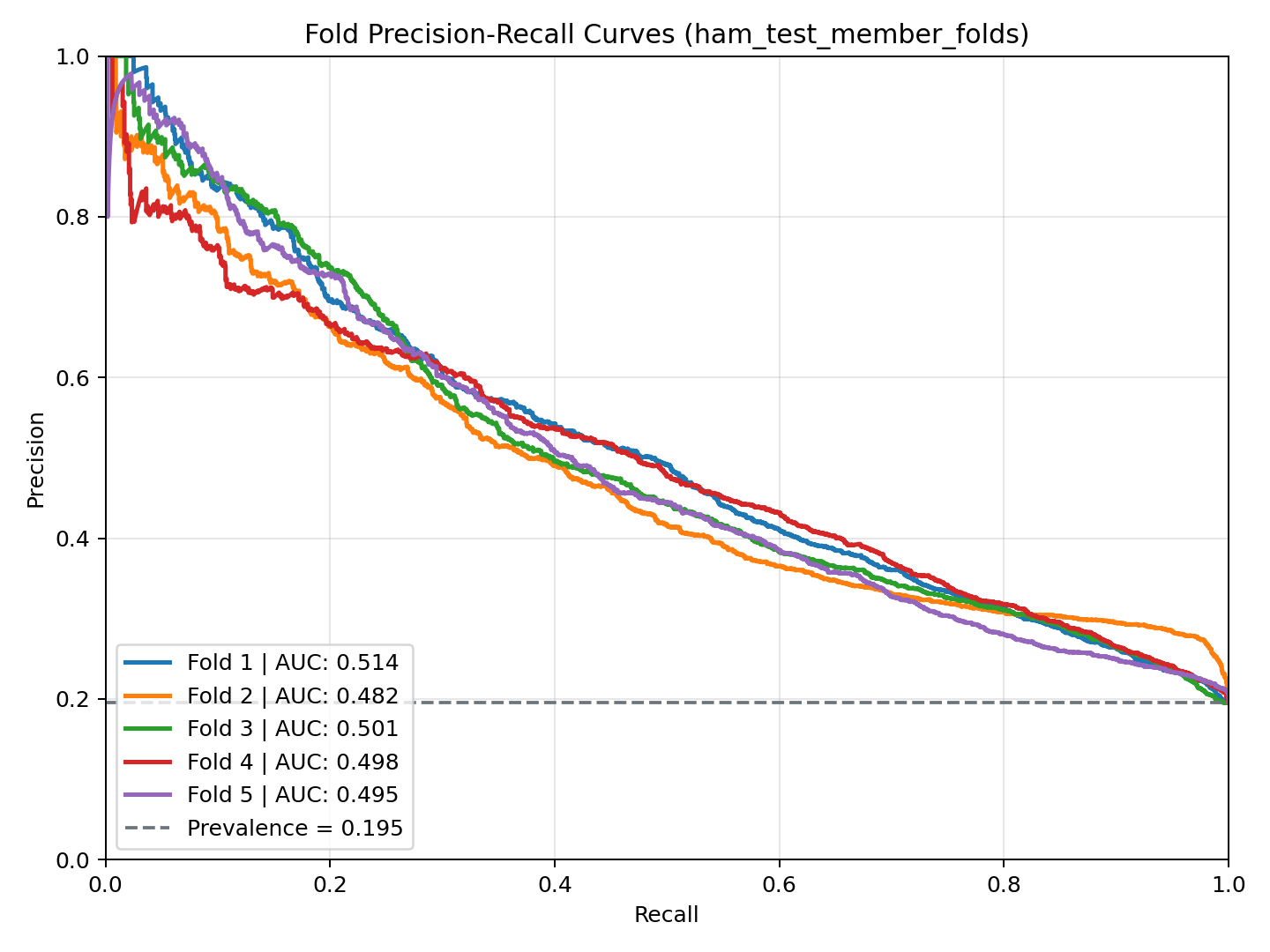

External-test precision-recall fold curves of the ensemble.

Validation ROC curve for ViT-B16 on ISIC.

Validation precision-recall curve for ViT-B16.

External-test ROC curve for ViT-B16 on HAM10000.

External-test precision-recall curve for ViT-B16.

Validation ROC curve for ViT-L16 on ISIC.

Validation precision-recall curve for ViT-L16.

External-test ROC curve for ViT-L16 on HAM10000.

External-test precision-recall curve for ViT-L16.